1911 Encyclopædia Britannica/Alloys

ALLOYS (through the Fr. aloyer, from Lat. alligare, to combine), a term generally applied to the intimate mixtures obtained by melting together two or more metals, and allowing the mass to solidify. It may conveniently be extended to similar mixtures of sulphur and selenium or tellurium, of bismuth and sulphur, of copper and cuprous oxide, and of iron and carbon, in fact to all cases in which substances can be made to mix in varying proportions without very marked indication of chemical action. The term “alloy” does not necessarily imply obedience to the laws of definite and multiple proportion or even uniformity throughout the material; but some alloys are homogeneous and some are chemical compounds. In what follows we shall confine our attention principally to metallic alloys.

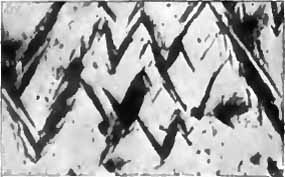

If we melt copper and add to it about 30% of zinc, or 20% of tin, we obtain uniform liquids which when solidified are the well-known substances brass and bell-metal. These substances are for all practical purposes new metals. The difference in the appearance of brass and copper is familiar to everyone; brass is also much harder than copper and much more suitable for being turned in a lathe. Similarly, bell-metal is harder, more sonorous and more brittle than either of its components. It is almost impossible by mechanical means to detect the separate ingredients in such an alloy; we may cut or file or polish it without discovering any lack of homogeneousness. But it is not permissible to call brass a chemical compound, for we can largely alter its percentage composition without the substance losing the properties characteristic of brass; the properties change more or less continuously, the colour, for example, becoming redder with decrease in the percentage of zinc, and a paler yellow when there is more zinc. The possibility of continuously varying the percentage composition suggests analogy between an alloy and a solution, and A. Matthiessen (Phil. Trans., 1860) applied the term “solidified solutions” to alloys. Regarded as descriptive of the genesis of an alloy from a uniform liquid containing two or more metals, the term is not incorrect, and it may have acted as a signpost towards profitable methods of research. But modern work has shown that, although alloys sometimes contain solid solutions, the solid alloy as a whole is often far more like a conglomerate rock than a uniform solution. In fact the uniformity of brass and bell-metal is only superficial; if we adopt the methods described in the article Metallography, and if, after polishing a plane face on a bit of gun-metal, we etch away the surface layer and examine the new surface with a lens or a microscope, we find a complex pattern of at least two materials. Fig. 
1 (Plate) is from a photograph of a bronze containing 23·3% by weight of tin. The acid used to etch the surface has darkened the parts richest in copper, while those richest in tin remained white. The two ingredients revealed by this process are not pure copper and pure tin, but each material contains both metals. In this case the white tin-rich portions are themselves a complex that can be resolved into two substances by a higher magnification. The majority of alloys, when examined thus, prove to be complexes of two or more materials, and the patterns showing the distribution of these materials throughout the alloy are of a most varied character. It is certain that the structure existing in the alloy is closely connected with the mechanical properties, such as hardness, toughness, rigidity, and so on, that make particular alloys valuable in the arts, and many efforts have been made to trace this connexion. These efforts have, in some cases, been very successful; for example, in the case of steel, which is an alloy of iron and carbon, a microscopical examination gives valuable information concerning the suitability of a sample of steel for special purposes.

PHOTOMICROGRAPHS OF ALLOYS AND METALS. (See Articles Metallography, Alloys, Gun-making, Iron and Steel.)

| Fig. | Article | Description |
|---|---|---|
| 1 | Alloys | (Heycock & Neville, Phil. Trans.) Bronze containing 23.3% of tin. Slowly cooled. Magnified 18 diameters. Dark parts are rich in copper, light parts in tin. |
| 2 | Alloys | (Ewing & Rosenhain, Phil. Trans.) Lead-tin eutectic. Magnified 750 diameters. |
| 3 | Alloys | (F. Osmond.) Silver-copper [copper=15%, silver=85%] reheated to purple colour. Magnified 600 diameters. |
| 4 | Alloys | (Heycock & Neville, Phil. Trans.) Copper-tin [tin 27.7%] chilled at 731° C. before complete solidification. Magnified 18 diameters. Blacks rich, whites less rich in copper. |
| 5 | Gun-making | Gun steel, C.=0.30%. From top of ingot as cast, magnified 29 diameters. Whites, ferrite; blacks, carbide. |
| 6 | Gun-making | Gun steel, C.=0.30%. From bottom of ingot as cast, magnified 29 diameters. Whites, ferrite; blacks, carbide. |
| 7 | Gun-making | Gun steel, C.=0.30%. Top of ingot, forged and annealed, magnified 29 diameters. Whites, ferrite; blacks, carbide. |
| 8 | Gun-making | Gun steel, C.=0.30%. Bottom of ingot, forged and annealed, magnified 29 diameters. Whites, ferrite; blacks, carbide. |
| 9 | Gun-making | Gun steel, C.=0.30%. Forged and annealed, magnified 1000 diameters, showing pearlite. |
| 10 | Gun-making | Gun steel, C.=0.30%. Oil hardened and annealed, magnified 50 diameters. |
| 11 | Iron and Steel | (Osmond.) Pearlite, steel (carbon about 1%) forged and annealed at 800° C. Magnified 1000 diameters. |
| 12 | Iron and Steel | (Stoughton.) Meshes of pearlite in a network of ferrite, from hypo-eutectoid steel. Magnified 250 diameters. |
| 13 | Iron and Steel | (Stoughton.) Meshes of pearlite in a network of cementite from hyper-eutectoid steel. Magnified 250 diameters. |
| 14 | Iron and Steel | (Osmond & Cartaud.) Martensite. Magnified 250 diameters. |
| 15 | Iron and Steel | (Osmond.) Martensite (black) in austenite (white). Steel (carbon about 1.5%) quenched at 1050° C. in ice-cold water. Magnified 250 diameters. |

Mixture by fusion is the general method of producing an alloy, but it is not the only method possible. It would seem, indeed, that any process by which the particles of two metals are intimately mingled and brought into close contact, so that diffusion of one metal into the other can take place, is likely to result in the formation of an alloy. For example, if vapours of the volatile metals cadmium, zinc and magnesium are allowed to act on platinum or palladium, alloys are produced. The methods of manufacture of steel by cementation, case-hardening and the Harvey process are important operations which appear to depend on the diffusion of the carburetting material into the solid metal. When a solution of silver nitrate is poured on to metallic mercury, the mercury replaces the silver in the solution, forming nitrate of mercury, and the silver is precipitated; it does not, however, appear as pure metallic silver, but in the form of crystalline needles of an alloy of silver and mercury. F. B. Mylius and O. Fromm have shown that alloys may be precipitated from dilute solutions by zinc, cadmium, tin, lead and copper. Thus a strip of zinc plunged into a solution of silver sulphate, containing not more than 0.03 gramme of silver in the litre, becomes covered with a flocculent precipitate which is a true alloy of silver and zinc, and in the same way, when copper is precipitated from its sulphate by zinc, the alloy formed is brass. They have also formed in this way certain alloys of definite composition, such as AuCd3, Cu2Cd, and, more interesting still, Cu3Sn. A very similar fact, that brass may be formed by electrodeposition from a solution containing zinc and copper, has long been known. W. V. Spring has shown that by compressing a finely divided mixture of 15 parts of bismuth, 8 parts of lead, 4 parts of tin and 3 parts of cadmium, an alloy is produced which melts at 100° C., that is, much below the melting-point of any of the four metals.
But these methods of forming alloys, although they suggest questions of great interest, cannot receive further discussion here.
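
As a small arithmetical aside, the proportions of Spring's compressed mixture can be turned into percentages. The sketch below assumes that the "parts" quoted are parts by weight, which the text does not state explicitly; the function name is hypothetical.

```python
def parts_to_percent(parts):
    """Convert a recipe given in parts into percentages of the whole."""
    total = sum(parts.values())
    if total <= 0:
        raise ValueError("the parts must add up to a positive quantity")
    return {metal: 100.0 * amount / total for metal, amount in parts.items()}


# Spring's mixture as quoted in the text (assumed to be parts by weight).
spring_mixture = {"bismuth": 15, "lead": 8, "tin": 4, "cadmium": 3}
percentages = parts_to_percent(spring_mixture)

if __name__ == "__main__":
    for metal, value in percentages.items():
        print(f"{metal}: {value:.1f}%")
```

On this reading the mixture is half bismuth, with lead, tin and cadmium making up the other half.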

Our knowledge of the nature of solid alloys has been much enlarged by a careful study of the process of solidification. Let us suppose that a molten mixture of two substances A and B, which at a sufficiently high temperature form a uniform liquid, and which do not combine to form definite compounds, is slowly cooled until it becomes wholly solid. The phenomena which succeed each other are then very similar, whether A and B are two metals, such as lead and tin or silver and copper, or are a pair of fused salts, or are water and common salt. All these mixtures when solidified may fairly be termed alloys.[1] If a mixture of A and B be melted and then allowed to cool, a thermometer immersed in the mixture will indicate a gradually falling temperature. But when solidification commences, the thermometer will cease to fall, it may even rise slightly, and the temperature will remain almost constant for a short time. This halt in the cooling, due to the heat evolved in the solidification of the first crystals that form in the liquid, is called the freezing-point of the mixture; the freezing-point can generally be observed with considerable accuracy. In the case of a pure substance, and of a certain small class of mixtures, there is no further fall in temperature until the substance has become completely solid, but, in the case of most mixtures, after the freezing-point has been reached the temperature soon begins to fall again, and as the amount of solid increases the temperature becomes lower and lower. There may be other halts in the cooling, both before and after complete solidification, due to evolution of heat in the mixture. These halts in temperature that occur during the cooling of a mixture should be carefully noted, as they give valuable information concerning the physical and chemical changes that are taking place. If we determine the freezing-points of a number of mixtures varying in composition from pure A to pure B, we can plot the freezing-point curve. 
In such a curve the percentage composition can be plotted horizontally and the temperature of the freezing-point vertically, as in fig. 5. In such a diagram, a point P defines a particular mixture, both as to percentage composition and temperature; a vertical line through P corresponds to the mixture at all possible temperatures, the point Q being its freezing-point. In the case of two substances which neither form compounds nor dissolve each other in the solid state, the complete freezing-point curve takes the form shown in fig. 5. It consists of two branches AC and BC, which meet in a lowest point C. It will be seen that as we increase the percentage of B from nothing up to that of the mixture C, the freezing-point becomes lower and lower, but that if we further increase the percentage of B in the mixture, the freezing-point rises. This agrees with the well-known fact that the presence of an impurity in a substance depresses its melting-point. The mixture C has a lower freezing or melting point than that of any other mixture; it is called the eutectic mixture. All the mixtures whose composition lies between that of A and C deposit crystals of pure A when they begin to solidify, while mixtures between C and B in composition deposit crystals of pure B. Let us consider a little more closely the solidification of the mixture represented by the vertical line PQRS. As it cools from P to Q the mixture remains wholly liquid, but when the temperature Q is reached there is a halt in the cooling, due to the formation of crystals of A. The cooling soon recommences and these crystals continue to form, but at lower and lower temperatures because the still liquid part is becoming richer in B. This process goes on until the state of the remaining liquid is represented by the point C. Now crystals of B begin to form, simultaneously with the A crystals, and the composition of the remaining liquid does not alter as the solidification progresses.
Consequently the temperature does not change and there is another well-marked halt in the cooling, and this halt lasts until the mixture has become wholly solid. The corresponding changes in the case of the mixture TUVW are easily understood—the first halt at U, due to the crystallization of pure B, will probably occur at a different temperature, but the second halt, due to the simultaneous crystallization of A and B, will always occur at the same temperature whatever the composition of the mixture. It is evident that every mixture except the eutectic mixture C will have two halts in its cooling, and that its solidification will take place in two stages. Moreover, the three solids S, D and W will differ in minute structure and therefore, probably, in mechanical properties. All mixtures whose temperature lies above the line ACB are wholly liquid, hence this line is often called the “liquidus”; all mixtures at temperatures below that of the horizontal line through C are wholly solid, hence this line is sometimes called the “solidus,” but in more complex cases the solidus is often curved. At temperatures between the solidus and the liquidus a mixture is partly solid and partly liquid. This general case has been discussed at length because a careful study of it will much facilitate the comprehension of the similar but more complicated cases that occur in the examination of alloys. A great many mixtures of metals have been examined in the above-mentioned way.
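
The cooling sequence just described can be followed numerically. The sketch below is an illustration only: it assumes that the two liquidus branches AC and BC are straight lines (real branches are generally curved), that the solids deposited are pure A and pure B, and that the melting-points and eutectic coordinates are invented round numbers, not measurements for any actual pair of metals. All function and constant names are hypothetical.

```python
# Illustrative simple eutectic; every number here is hypothetical.
T_A = 300.0   # melting-point of pure A (assumed)
T_B = 250.0   # melting-point of pure B (assumed)
X_C = 60.0    # percentage of B in the eutectic mixture C (assumed)
T_C = 180.0   # eutectic temperature (assumed)


def freezing_point(x_b):
    """Temperature of the first halt for a mixture containing x_b % of B.

    Assumes straight liquidus branches AC and BC.
    """
    if not 0.0 <= x_b <= 100.0:
        raise ValueError("percentage of B must lie between 0 and 100")
    if x_b <= X_C:
        # Branch AC: falls from T_A at 0% of B to T_C at the eutectic.
        return T_A - (T_A - T_C) * x_b / X_C
    # Branch BC: falls from T_B at 100% of B to T_C at the eutectic.
    return T_B - (T_B - T_C) * (100.0 - x_b) / (100.0 - X_C)


def primary_crystal_fraction(x_b):
    """Fraction of the mass deposited as primary crystals before the
    remaining liquid reaches the eutectic composition C.

    Mass balance: if pure A separates, all the B stays in the liquid,
    so (liquid fraction) * X_C = x_b; similarly on the B side.
    """
    if x_b <= X_C:
        return 1.0 - x_b / X_C
    return 1.0 - (100.0 - x_b) / (100.0 - X_C)


def halts(x_b):
    """Temperatures at which halts in the cooling are expected."""
    first = freezing_point(x_b)
    primary = primary_crystal_fraction(x_b)
    if primary >= 1.0 - 1e-12:
        return [first]        # a pure substance: one halt only
    if primary <= 1e-12:
        return [T_C]          # the eutectic mixture: one halt only
    return [first, T_C]       # every other mixture: two halts


if __name__ == "__main__":
    for x in (0.0, 30.0, 60.0, 80.0, 100.0):
        print(x, halts(x), round(primary_crystal_fraction(x), 3))
```

Under these assumptions a mixture with 30% of B first halts at 240°, has deposited half its mass as crystals of A by the time the liquid reaches C, and halts again at the eutectic temperature, which is the same for every mixture.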

Fig. 6 gives the freezing-point diagram for alloys of lead and tin. We see in it exactly the features described above. The two sloping lines cutting at the eutectic point are the freezing-point curves of alloys that, when they begin to solidify, deposit crystals of lead and tin respectively. The horizontal line through the eutectic point gives the second halt in cooling, due to the simultaneous formation of lead crystals and tin crystals. In the case of this pair of metals, or indeed of any metallic alloy, we cannot see the crystals forming, nor can we easily filter them off and examine them apart from the liquid, although this has been done in a few cases. But if we polish the solid alloys, etch them if necessary, and examine them microscopically, we shall find that alloys on the lead side of the diagram consist of comparatively large crystals of lead embedded in a minute complex, which is due to the simultaneous crystallization of the two metals during the solidification at the eutectic temperature. If we examine alloys on the tin side we shall find large crystals of tin embedded in the same complex. The eutectic alloy itself, fig. 2 (Plate), shows the minute complex of the tin-lead eutectic, photographed by J. A. Ewing and W. Rosenhain, and fig. 3 (Plate), photographed by F. Osmond, shows the structure of a silver-copper alloy containing considerably more silver than the eutectic. Here, the large dark masses are the silver or silver-rich substance that crystallized above the eutectic temperature, and the more minute black and white complex represents the eutectic. It is not safe to assume that the two ingredients we see are pure silver and pure copper; on the contrary, there is reason to think that the crystals of silver contain some copper uniformly diffused through them, and vice versa. It is, however, not possible to detect the copper in the silver by means of the microscope. This uniform distribution of a solid substance throughout the mass of another, so as to form a homogeneous material, is called “solid solution,” and we may say that solid silver can dissolve copper. Solid solutions are probably very common in alloys, so that when an alloy of two metals shows two constituents under the microscope it is never safe to infer, without further evidence, that these are the two pure metals. Sometimes the whole alloy is a uniform solid solution. This is the case with the copper-tin alloys containing less than 9% by weight of tin; a microscopic examination reveals only one material, a copper-like substance, the tin having disappeared, being in solution in the copper.

Much information as to the nature of an alloy can be obtained by placing several small ingots of the same alloy in a furnace which is above the melting-point of the alloy, and allowing the temperature to fall slowly and uniformly. We then extract one ingot after another at successively lower temperatures and chill each ingot by dropping it into water or by some other method of very rapid cooling. The chilling stereotypes the structure existing in the ingot at the moment it was withdrawn from the furnace, and we can afterwards study this structure by means of the microscope. We thus learn that the bronzes referred to above, although chemically uniform when solid, are not so when they begin to solidify, but that the liquid deposits crystals richer in copper than itself, and therefore that the residual liquid becomes richer in tin. Consequently, as the final solid is uniform, the crystals formed at first must change in composition at a later stage. We learn also that solid solutions which exist at high temperatures often break up into two materials as they cool; for example, the bronze of fig. 1, which in that figure shows two materials so plainly, if chilled at a somewhat higher temperature but when it was already solid, is found to consist of only one material; it is then a uniform solid solution. The difference between softness and hardness in ordinary steel is due to the permanence of a solid solution of carbon in iron if the steel has been chilled or very rapidly cooled, while if the steel is slowly cooled this solid solution breaks up into a minute complex of two substances which is called pearlite. The pearlite when highly magnified somewhat resembles the lead-tin eutectic of fig. 2 (Plate). In the case of steel (see Iron and Steel) the solid solution is very hard, while the pearlite complex is much softer. 
In the case of some bronzes, for example that with about 25% of tin, the solid solution is soft, and the complex into which it breaks up by slow cooling is much harder, so that the same process of heating and chilling which hardens steel will soften this bronze.

If we melt an alloy and chill it before it has wholly solidified, we often get evidence of the crystalline character of the solid matter which first forms. Fig. 4 (Plate) is the pattern found in a bronze containing 27·7% of tin when so treated. The dark, regularly oriented crystal skeletons were already solid at the moment of chilling; they are rich in copper. The lighter part surrounding them was liquid before the chill; it is rich in tin. This alloy, if allowed to solidify completely before chilling, turns into a uniform solid solution, and at still lower temperatures the solid solution breaks up into a pearlite complex. The analogy between the breaking up of a solid solution on cooling and the formation of a eutectic is obvious. Iron and phosphorus unite to form a solid solution which breaks up on cooling into a pearlite. Other cases could be quoted, but enough has been said to show the importance of solid solutions and their influence on the mechanical properties of alloys. These uniform solid solutions must not be mistaken for chemical compounds; they can, within limits, vary in composition like an ordinary liquid solution. But the occasional or indeed frequent existence of chemical compounds in alloys has now been placed beyond doubt.

We can sometimes obtain definite compounds in a pure state by the action of appropriate solvents which dissolve the rest of the alloy and do not attack the crystals of the compound. Thus, a number of copper-tin alloys when digested with hydrochloric acid leave the same crystalline residue, which on analysis proves to be the compound Cu3Sn. The bodies SbNa3, BiNa3, SnNa4, compounds of iron and molybdenum and many other substances, have also been isolated in this way. The freezing-point curve sometimes indicates the existence of chemical compounds. The simple type of curve, such as that of lead and tin, fig. 6, consisting of two downward sloping branches meeting in the eutectic point, and that of thallium and tin, the upper curve of fig. 7, certainly give no indication of chemical combination. But the curves are not always so simple as the above. The lower curve of fig. 7 gives the freezing-point curve of mercury and thallium; here A and E are the melting-points of pure mercury and pure thallium, and the branches AB and ED do not cut each other, but cut an intermediate rounded branch BCD. There are thus two eutectic alloys B and D, and the alloys with compositions between B and D have higher melting-points. The summit C of the branch BCD occurs at a percentage exactly corresponding to the formula Hg2Tl. It is probable that all the alloys of compositions between B and D, when they begin to solidify, deposit crystals of the compound; the lower eutectic B probably corresponds to a solid complex of mercury and the compound. The point B is at −60° C., the lowest temperature at which any metallic substance is known to exist in the liquid state. The higher eutectic D may correspond to a complex of solid thallium and the compound; but the possible existence of solid solutions makes further investigation necessary here. The curves of fig. 7 were determined by N. S. Kurnakow and N. A. Puschin. Sometimes a freezing-point curve contains more than one intermediate summit, so that more than one compound is indicated. For example, in the curve for gold-aluminium, ignoring minor singularities, we find two intermediate summits, one at the percentage Au2Al, and another at the percentage AuAl2. Microscopic examination fully confirms the existence of these compounds.
The substance AuAl2 is the most remarkable compound of two metals that has so far been discovered; although it contains so much aluminium its melting-point is as high as that of gold. It also possesses a splendid purple colour, more remarkable than that of any other metal or alloy.
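
Whether a summit falls at the percentage corresponding to a simple formula is a matter of arithmetic on atomic weights. The following sketch converts a formula into percentages by weight. The atomic weights are modern rounded values supplied here for illustration, not figures taken from the article, and the helper name is hypothetical.

```python
# Modern rounded atomic weights (an assumption of this sketch).
ATOMIC_WEIGHT = {
    "Hg": 200.59,
    "Tl": 204.38,
    "Au": 196.97,
    "Al": 26.98,
    "Cu": 63.55,
    "Sn": 118.71,
}


def weight_percent(formula):
    """Percentages by weight for a formula given as {element: atoms}."""
    masses = {el: n * ATOMIC_WEIGHT[el] for el, n in formula.items()}
    total = sum(masses.values())
    return {el: 100.0 * m / total for el, m in masses.items()}


if __name__ == "__main__":
    examples = {
        "Hg2Tl": {"Hg": 2, "Tl": 1},
        "AuAl2": {"Au": 1, "Al": 2},
        "Cu3Sn": {"Cu": 3, "Sn": 1},
    }
    for name, formula in examples.items():
        shares = weight_percent(formula)
        print(name, {el: round(p, 1) for el, p in shares.items()})
```

With these weights AuAl2, although two-thirds of its atoms are aluminium, comes out at roughly 78.5% of gold by weight, which shows why atomic and weight percentages must be kept distinct when reading a freezing-point curve.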

Many other inter-metallic compounds have been indicated by summits in freezing-point curves. For example, the system sodium-mercury has a remarkable summit at the composition NaHg2. This compound melts at 350° C., a temperature far above the melting-point of either sodium or mercury. In the system potassium-mercury, the compound KHg2 is similarly indicated. In the curve for sodium-cadmium, the compound NaCd2 is plainly shown. These three examples are taken from the work of N. S. Kurnakow. Various compounds of the alkali metals with bismuth, antimony, tin and lead have been prepared in a pure state. Such are the compounds SbNa3, BiNa3, PbNa2, SnNa4. Of these, the first three are well indicated on the freezing-point curves. The intermediate summits occurring in the freezing-point curves of alloys are usually rounded; this feature is believed to be due to the partial decomposition of the compound which takes place when it melts. The formulae of the group of substances last mentioned are in harmony with the ordinary views of chemists as to valency, but the formulae NaHg2, NaCd2, NaTl2, AuAl2 are more surprising. They indicate the great gaps in our present knowledge of the subject of valency. We must not take it for granted, when the freezing-point curve gives no indication of the compound, that the compound does not exist in the solid alloy. For example, the compound Cu3Sn is not indicated in the freezing-point curve, and indeed a liquid alloy of this percentage does not begin to solidify by the formation of crystals of Cu3Sn; the liquid solidifies completely to a uniform solid solution, and only at a lower temperature does this change into crystals of the compound, the transformation being accompanied by a considerable evolution of heat. Until recently the vast subject of inter-metallic compounds has been an unopened book to chemists. But the subject is now being vigorously studied, and, apart from its importance as a branch of descriptive chemistry, it is throwing light, and promises to throw more, on obscure parts of chemical theory.

The graphical representation of the properties of alloys can be extended so as to record all the changes, thermal and chemical, which the alloy undergoes after, as well as before, solidification, including the formation and breaking up of solid solutions and compounds. For an example of such a diagram, see the Bakerian Lecture, 1903, Phil. Trans., A. 346. The Phase Rule of Willard Gibbs, especially as developed by Bakhuis Roozeboom, is a most useful guide in such investigations.

So far we have been considering alloys containing two metals; the phenomena they present are by no means simple. But when three or more metals are present, as is often the case in useful alloys, the phenomena are much more complicated. With three component metals the complete diagram giving the variations in any property must be in three dimensions, although by the use of contour lines the essential facts can be represented in a plane diagram. The following method, depending on the constancy of the sum of the perpendiculars from any point on to the sides of an equilateral triangle, can be adopted: Let ABC (fig. 8) be an equilateral triangle, the angular points corresponding to the three pure metals A, B, C. Then the composition of any alloy can be represented by a point P, so chosen that the perpendicular Pa on to the side BC gives the percentage of A in the alloy, and the perpendiculars Pb and Pc give the percentages of B and C respectively. Points on the side AB will correspond to binary alloys containing only A and B, and so on. If now we wish to represent the variations in some property, such as fusibility, we determine the freezing-points of a number of alloys distributed fairly uniformly over the area of the triangle, and, at each point corresponding to an alloy, we erect an ordinate at right angles to the plane of the paper and proportional in length to the freezing temperature of that alloy. We can then draw a continuous surface through the summits of all these ordinates, and so obtain a freezing-point surface, or liquidus; points above this surface will correspond to wholly liquid alloys. The ternary alloys containing bismuth, tin and lead have been studied in this way by F. Charpy and by E. S. Shepherd. We have here a comparatively simple case, as the metals do not form compounds. The solid alloy consists of crystals of pure tin in juxtaposition with crystals of almost pure lead and bismuth, these two metals dissolving each other in solid solution to the extent of a few per cent only. If now we cut the freezing-point surface by planes parallel to the base ABC we get curves giving us all the alloys whose freezing-point is the same; these isothermals can be projected on to the plane of the triangle and are seen as dotted lines in fig. 9. The freezing surface, in this case, consists of three sheets each starting from an angular point of the surface, that is, from the freezing-point of a pure metal. The sheets meet in pairs along three lines which themselves meet in a point. In fig. 9, due to F. Charpy, these lines are projected on to the plane of the triangle as Ee, E′e and E″e. The area of the triangle is thus divided into three regions. The region PbEeE′ contains all the alloys that commence their solidification by the crystallization of lead; similarly, the other two regions correspond to the initial crystallization of bismuth and tin respectively; these areas are the projections of the three sheets of the freezing-point surface. The points E, E′, E″ are the eutectics of binary alloys. Alloys represented by points on Ee, when they begin to solidify, deposit crystals of lead and bismuth simultaneously; Ee is a eutectic line, as also are E′e and E″e. The alloy of the point e is the ternary eutectic; it deposits the three metals simultaneously during the whole period of its solidification and solidifies at a constant temperature. As the lines of the surface which correspond to Ee, &c., slope downwards to their common intersection it follows that the alloy e has the lowest freezing-point of any mixture of the three metals; this freezing-point is 96° C., and the alloy e contains about 32% of lead, 15·5% of tin and 52·5% of bismuth.
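
The triangular construction can be checked numerically. The sketch below places the triangle ABC in ordinary Cartesian coordinates (a choice made here for illustration; the article prescribes no coordinates), locates the point P for a given composition, and confirms that the three perpendiculars reproduce the percentages. The triangle is scaled so that its height is 100, which makes the constant sum of the perpendiculars equal to 100. The assignment of lead, tin and bismuth to the corners A, B and C is arbitrary.

```python
import math

HEIGHT = 100.0                          # height of the triangle (a scaling choice)
SIDE = 2.0 * HEIGHT / math.sqrt(3.0)    # side of an equilateral triangle of that height

# Corners of the triangle: A at the apex, B and C on the base.
A = (SIDE / 2.0, HEIGHT)
B = (0.0, 0.0)
C = (SIDE, 0.0)


def point_for(a, b, c):
    """Position of the point P for an alloy with a% of A, b% of B, c% of C."""
    if abs(a + b + c - 100.0) > 1e-9:
        raise ValueError("the three percentages must add up to 100")
    wa, wb, wc = a / 100.0, b / 100.0, c / 100.0
    x = wa * A[0] + wb * B[0] + wc * C[0]
    y = wa * A[1] + wb * B[1] + wc * C[1]
    return (x, y)


def distance_to_line(p, q, r):
    """Perpendicular distance from the point p to the line through q and r."""
    cross = (r[0] - q[0]) * (p[1] - q[1]) - (r[1] - q[1]) * (p[0] - q[0])
    return abs(cross) / math.hypot(r[0] - q[0], r[1] - q[1])


def perpendiculars(p):
    """Lengths Pa, Pb, Pc on to the sides BC, CA and AB respectively."""
    return (
        distance_to_line(p, B, C),
        distance_to_line(p, C, A),
        distance_to_line(p, A, B),
    )


if __name__ == "__main__":
    # Ternary eutectic quoted in the text: 32% lead, 15.5% tin, 52.5% bismuth.
    p = point_for(32.0, 15.5, 52.5)
    print([round(d, 6) for d in perpendiculars(p)])
```

Because the perpendiculars always sum to the height of the triangle, any two of them fix the third, just as any two percentages fix the third in a ternary alloy.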

It is evident that any other property can be represented by similar diagrams. For example, we can construct the curve of conductivity of alloys of two metals or the surface of conductivity of ternary alloys, and so on for any measurable property.

The electrical conductivity of a metal is often very much decreased by alloying with it even small quantities of another metal. This is so when gold and silver are alloyed with each other, and is true in the case of alloys of copper. When a pure metal is cooled to a very low temperature its electrical conductivity is greatly increased, but this is not the case with an alloy. Lord Rayleigh has pointed out that the difference may arise from the heterogeneity of alloys. When a current is passed through a solid alloy, a series of Peltier effects, proportional to the current, are set up between the particles of the different metals, and these create an opposing electromotive force which is indistinguishable experimentally from a resistance. If the alloy were a true chemical compound the counteracting electromotive force should not occur; experiments in this direction are much needed. Sir William Chandler Roberts-Austen has shown that in the case of molten alloys the conduction of electricity is apparently metallic, no transfer of matter attending the passage of the current. A group of bodies may, however, be yet discovered between alloys and electrolytes in which evidence may be found of some gradual change from wholly metallic to electrolytic conduction. A. P. Laurie has determined the electromotive force of a series of copper-zinc, copper-tin and gold-tin alloys, and as the result of his experiments he points to the existence of definite compounds. Explosive alloys have been formed by H. St Claire Deville and H. J. Debray in the case of rhodium, iridium and ruthenium, which evolve heat when they are dissolved in zinc. When the solution of the rhodium-zinc alloy is treated with hydrochloric acid, a residue is left which undergoes a change with explosive violence if it be heated in vacuo to 400°. The alloy is then insoluble in “aqua regia.” The metals have therefore passed into an insoluble form by a comparatively slight elevation of temperature.

Metals do not appear to have been studied from the point of view of surfusion until 1880, when A. D. van Riemsdijk showed that gold and silver would both pass below their actual freezing-points without becoming solid. Roberts-Austen pointed out that surfusion might be easily measured in metals and in alloys by the sensitive method of recording pyrometry perfected by him. He also showed that the crossing of curves of solubility, which had already been observed by H. le Chatelier and by A. C. A. Dahms in the case of salts, could be measured in the lead-tin alloys. The investigation of the mutual relations of partially miscible liquids, due to P. Alexejew, D. P. Konovalow, and to P. E. Duclaux, was extended to alloys by Alder Wright. The addition of a third metal will sometimes render the mixture of two other metals homogeneous. C. T. Heycock and F. H. Neville proved that when one metal is alloyed with a small quantity of some other metal, the solidification obeys the law of F. M. Raoult. Their experiments, although not conclusive, appear to indicate that the molecule of a metal when in dilute solution often consists of one atom. There are, however, numerous exceptions to this rule. In the cases of aluminium dissolved in tin and of mercury or bismuth in lead, it is at least probable that the molecules in solution are Al2, Hg2 and Bi2 respectively, while tin in lead appears to form a molecule of the type Sn4.
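
The inference from Raoult's law to the size of the dissolved molecule can be sketched as follows. In a dilute solution the depression of the freezing-point is taken to be proportional to the number of dissolved molecules in a given weight of solvent, so a depression smaller than that expected for single atoms suggests that the atoms are grouped. Every number below is hypothetical and chosen only to make the arithmetic plain; the function name and the value of the solvent's constant are assumptions, not data from Heycock and Neville.

```python
def atoms_per_molecule(observed_depression, grams_solute, atomic_weight,
                       grams_solvent, molar_depression):
    """Apparent number of atoms in each dissolved molecule.

    molar_depression is the fall in freezing-point (in degrees) produced
    by one gram-molecule of solute in 1000 g of the solvent; it is assumed
    to be known for the solvent metal.
    """
    if observed_depression <= 0:
        raise ValueError("the observed depression must be positive")
    # Gram-atoms of solute per 1000 g of solvent.
    gram_atoms_per_kg = (grams_solute / atomic_weight) / (grams_solvent / 1000.0)
    # Depression to be expected if every atom were a separate molecule.
    expected_if_monatomic = molar_depression * gram_atoms_per_kg
    return expected_if_monatomic / observed_depression


if __name__ == "__main__":
    # Hypothetical experiment: 1 g of a metal of atomic weight 100 dissolved
    # in 100 g of a solvent whose constant is taken as 30 degrees.
    print(atoms_per_molecule(3.0, 1.0, 100.0, 100.0, 30.0))  # single atoms
    print(atoms_per_molecule(1.5, 1.0, 100.0, 100.0, 30.0))  # paired atoms
```

A depression equal to the monatomic expectation points to single atoms, while half that depression points to molecules of two atoms, on the same reasoning that leads to formulae of the type Al2 in the text.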

Since 1875 increased attention has been devoted to the applications of the rarer metals. Thus nickel, which was formerly used in the manufacture of “German silver” as a substitute for silver, is now widely employed in naval construction and in the manufacture of steel armourplate and projectiles. Alloyed with copper, it is used for the envelopes of bullets. A nickel steel containing 36% of nickel has the property of retaining an almost constant volume when heated or cooled through a considerable range of temperature; it is therefore useful for the construction of pendulums and for measures of length. Another steel containing 45% of nickel has, like platinum, the same coefficient of expansion as glass. It can therefore be employed, instead of that costly metal, in the construction of incandescent lamps where a wire has to be fused into the glass to establish electric connexion between the inside and the outside of the bulb. Manganese not only forms with iron several alloys of great interest, but alloyed with copper it is used for electrical purposes, as an alloy can thus be obtained with an electrical resistance that does not alter with change of temperature; this alloy, called manganin, is used in the construction of resistance-boxes. Chromium also, in comparatively small quantities, is taking its place as a constituent of steel axles and tires, and in the manufacture of tool-steel. Steels containing as much as 12% of tungsten are now used as a material for tools intended for turning and planing iron and steel. The peculiarity of these steels is that no quenching or tempering is required. They are normally hard and remain so, even at a faint red heat; much deeper cuts can therefore be taken at a high speed without blunting the tool. Vanadium, molybdenum and titanium may be expected soon to play an important part in the constitution of steel. Titanium is alloyed in small quantities with aluminium for use in naval architecture.
Aluminium, when alloyed with a few per cent of magnesium, gains greatly in rigidity while remaining very light; this alloy, under the name of magnalium, is coming into use for small articles in which lightness and rigidity have to be combined. One of the most interesting amongst recent alloys is Conrad Heusler’s alloy of copper, aluminium and manganese, which possesses magnetic properties far in excess of those of the constituent metals.
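The value of the 36% nickel steel for pendulums and measures of length can be made concrete with the ordinary rule of linear expansion, change of length = coefficient × length × change of temperature. The sketch below is illustrative only: the two coefficients are assumed round figures of the usual order of magnitude for ordinary steel and for a 36% nickel steel, not values given in this article, and the names are hypothetical.

```python
def length_change(length_m, alpha_per_degree, temperature_rise):
    """Linear expansion: change of length = alpha * length * temperature rise."""
    return alpha_per_degree * length_m * temperature_rise


# Assumed round coefficients of linear expansion, per degree Centigrade.
ALPHA_STEEL = 12e-6             # ordinary steel (assumed)
ALPHA_NICKEL_STEEL_36 = 1.2e-6  # 36% nickel steel (assumed)

rod_length = 1.0   # a pendulum rod one metre long
rise = 20.0        # a rise of twenty degrees

for name, alpha in [("ordinary steel", ALPHA_STEEL),
                    ("36% nickel steel", ALPHA_NICKEL_STEEL_36)]:
    change_mm = length_change(rod_length, alpha, rise) * 1000.0
    print(f"{name}: {change_mm:.3f} mm")
```

With these assumed coefficients the rod of nickel steel alters in length by about one-tenth as much as the rod of ordinary steel, which is why the rate of a clock fitted with such a pendulum is so little affected by the seasons.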

The importance is now widely recognized of considering the mechanical properties of alloys in connexion with the freezing-point curves to which reference has already been made, but the subject is a very complicated one, and all that need be said here is that when considered in relation to their melting-points the pure metals are consistently weaker than alloys. The presence in an alloy of a eutectic which solidifies at a much lower temperature than the main mass implies a great reduction in tenacity, especially if the alloy is to be used above the ordinary temperature, as in the case of pipes conveying super-heated steam. It has also been stated that alloys of metals with similar melting-points have higher tenacity when the atomic volumes of the constituent metals differ than when they are nearly the same.

References.—Alloys have formed a subject of reports to several scientific societies. Sir W. C. Roberts-Austen’s six Reports (1891 to 1904) to the Alloys Research Committee of the Institution of Mechanical Engineers, London, the last report being concluded by William Gowland; the Cantor Lectures on Alloys delivered at the Society of Arts; and the Contribution à l’étude des alliages (1901), published by the Société d’encouragement pour l’industrie nationale under the direction of the Commission des alliages (1896–1900), should be consulted. The theoretical aspect is discussed in Léon Guillet’s Étude théorique des alliages métalliques (1904). W. T. Brannt’s The Metallic Alloys (1896); Roberts-Austen’s Introduction to the Study of Metallurgy (1902); and R. H. Thurston’s Materials of Engineering, should be consulted for the more practical details.

Recent progress is reported in the scientific periodicals, especially in The Iron and Steel Metallurgist, formerly The Metallographist (Boston, Mass.), and Metallurgie (Halle). Important memoirs by Ewing and Rosenhain, and by C. T. Heycock and F. H. Neville in the Philosophical Transactions, by N. S. Kurnakow in the Zeitschrift für anorganische Chemie, and by E. S. Shepherd in the Journal of Physical Chemistry, may also be consulted. (W. C. R.-A.; F. H. Ne.)